XBUILD 2018

OVERVIEW

This event was a 24-hour hackathon that took place in Boulder, Colorado, in 2018. Sponsors posed problems to be solved using their products, but the hackathon as a whole had no guidelines. The prompt was, in essence, “Make a cool product.”

LIMITATIONS

The biggest limitation for this hackathon was that we only had 24 hours to solve the challenges. We also had access to a limited pool of tools and equipment for this project.

GAME PLAN

We looked at the sponsored challenges and saw an opportunity to combine several of them into a single hack. Our use case was a train wreck involving hazardous materials, where responders need to do rapid remote triage. This drove us to look for an innovative way to control a drone when the operator has limited dexterity, which is common when wearing Hazmat and similar anti-exposure suits.

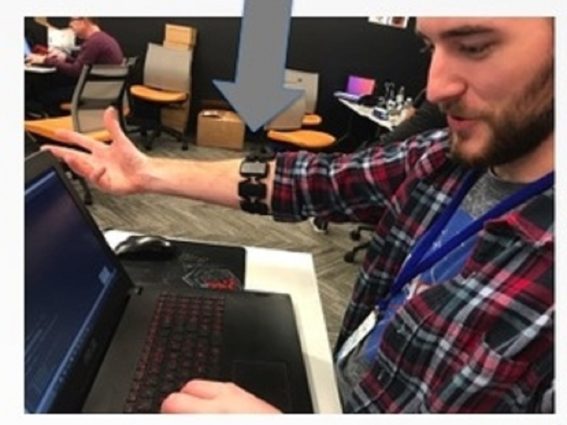

Our overall hack design was a platform, mounted on a drone, that could assist medical professionals in rapidly treating injured people. The idea is to get a (simulated) drone that is connected to the internet into the air, fly it to a site where people may be injured, and take a picture of the people there. That picture is then sent to IBM’s Watson, which uses a trained profile to determine whether each person is standing, sitting, or prone. Throughout, the drone is piloted with a Myo Armband so that it can be flown even when the operator cannot make fine finger movements.

I personally worked on the Myo Armband and the drone simulation. The Myo has an SDK, available on the manufacturer's website, that I used to track the tilt of the arm. I then mapped that tilt angle to the speed at which the drone should move. The drone simulation platform we used was FlytBase, one of the sponsors. They offer cloud-based drone solutions that let you send commands to cloud-connected drones. I used a Python script to translate the Myo tilt into a velocity and send it to the cloud drone. I also mapped specific hand gestures that the Myo recognizes to specific drone movements. For example, I mapped a spread-fingers gesture to land/takeoff.
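To make the mapping concrete, here is a minimal Python sketch of the idea, not the script we actually ran at the hackathon. It leaves out the Myo SDK and FlytBase calls entirely and only shows the math: a tilt angle in degrees goes in, a velocity comes out. The constants, the function names, the dead zone, and the `"fingers_spread"` gesture label are all assumptions made for illustration.

```python
from typing import Optional

# All values below are illustrative assumptions, not the hackathon's settings.
MAX_TILT_DEG = 45.0   # arm tilt that maps to full speed
MAX_SPEED_MPS = 2.0   # top speed commanded to the drone, in m/s
DEADZONE_DEG = 5.0    # small tilts are ignored so a resting arm means "hover"


def tilt_to_velocity(tilt_deg: float,
                     max_tilt_deg: float = MAX_TILT_DEG,
                     max_speed: float = MAX_SPEED_MPS,
                     deadzone_deg: float = DEADZONE_DEG) -> float:
    """Linearly map an arm tilt angle (degrees) to a signed velocity (m/s)."""
    if abs(tilt_deg) < deadzone_deg:
        return 0.0
    # Clamp so that over-rotating the arm never exceeds the top speed.
    clamped = max(-max_tilt_deg, min(max_tilt_deg, tilt_deg))
    sign = 1.0 if clamped > 0 else -1.0
    usable_range = max_tilt_deg - deadzone_deg
    return sign * (abs(clamped) - deadzone_deg) / usable_range * max_speed


def gesture_to_command(gesture: str, airborne: bool) -> Optional[str]:
    """Map a recognized gesture to a drone command, or None if unmapped.

    The gesture string is a hypothetical label; a real script would use
    whatever pose identifiers the armband SDK reports.
    """
    if gesture == "fingers_spread":
        # One gesture toggles between taking off and landing.
        return "land" if airborne else "takeoff"
    return None
```

In a real script, the tilt would come from the armband's orientation data and the returned velocity and command would be forwarded to the drone platform. The dead zone is a design choice worth keeping: without it, small involuntary arm movements would make the drone drift.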

Our team did great, and it all came together to win us 3rd place overall! Link to the Submission!